Why do clinical trials fail?

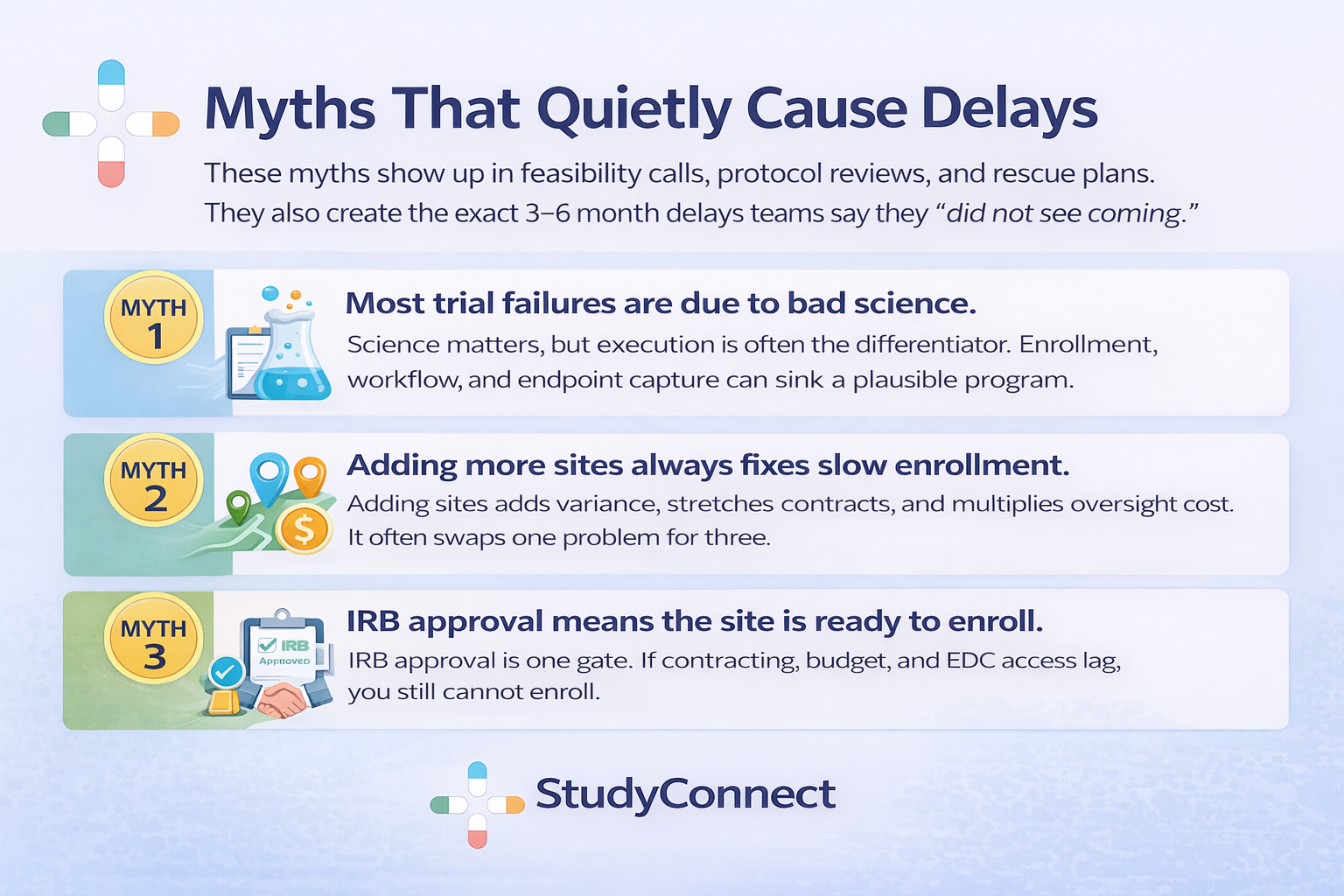

Clinical trials fail more often than most teams expect. And a painful number of failures are not “bad science” problems. They are process problems you can spot early and fix fast. This blog breaks the mistakes down by trial stage, with practical prevention steps.

Why do clinical trials fail?

Many programs enter the clinic with strong preclinical logic and still collapse.

Across therapeutic areas, only about 5–14% of drug candidates make it from Phase I to approval, meaning around 90% never reach market (MedX DRG).

That attrition is not just biology. It is also execution.

Why this topic matters

Clinical trials are slow, expensive, and exposed to compounding friction. A “small” process miss can trigger amendments, re-consents, retraining, and rework.

Here is the uncomfortable part: later-stage failures increasingly include non-scientific drivers.

In an analysis of Phase II/III terminations, enrollment issues were cited in nearly 18% of terminations with reasons provided, while strategic/business decisions were ~36% (Nature Reviews Drug Discovery).

That is why operational discipline is not “admin.” It is core strategy.

Process mistakes harm outcomes in four ways:

- Patient safety: unclear procedures, drifting eligibility, late safety reporting

- Data quality: inconsistent endpoint capture, missing data, avoidable queries

- Timelines: IRB cycles, contract stalls, slow recruitment, delayed database lock

- Regulatory risk: weak rationale, poor documentation, audit findings

I have repeatedly seen trials stumble because people thought they agreed on the objective, but they did not. In one study I was close to, the sponsor aimed for a strong clinical signal while operations optimized for speed. Endpoints were interpreted differently across sites, data became inconsistent, and we were forced into an amendment and partial rework after dozens of patients were enrolled. It was not a science failure. It was a failure to align on what “success” meant before first patient in.

How to use this blog

Each mistake below includes what it looks like, why it happens, impact, and a prevention step.

Use it like a checklist during study planning, and like a diagnostic tool once the trial is live.

- Planning (objectives, feasibility, risk)

- Protocol design (endpoints, eligibility, bias, stats)

- Site start-up (IRB/ethics, contracts, training, documentation)

- Trial conduct (recruitment, retention, deviations, clinical reasoning)

- Data, monitoring, quality (EDC to database lock)

- Ethics and compliance (GCP-ready, audit-ready)

Common mistakes in the Planning stage

Planning mistakes reliably create downstream problems. When assumptions are wrong at this stage, teams pay later through amendments, delays, and noisy or unusable data.

Mistake 1: Poorly defined trial objectives not aligned with regulators

Teams often write objectives that sound scientific but do not support a real regulatory or clinical decision. Decision-focused objectives are different from exploratory curiosity.

In practice, this shows up as objectives that do not clearly map to a regulatory or clinical decision, heavy use of vague “we will explore…” language, and endpoints or analyses that cannot actually support the claim the team wants to make. These issues usually arise because teams prioritize speed or publication goals, regulatory thinking enters too late, and operations is not asked whether objectives are feasible to execute consistently across sites.

The impact is significant: studies fail to hold up during regulatory interactions, protocols require amendments to “tighten” objectives, and sites collect endpoints inconsistently because they are unclear on what truly matters.

Prevention: Sanity-check against ICH E6(R3) Good Clinical Practice principles and region expectations early (FDA/EU). Use the Guideline for Good Clinical Practice E6(R3) as a shared baseline. Hold a 60-minute objective alignment review with clinical, ops, data, biostats, and regulatory.

Mistake 2: Optimistic feasibility assessments and enrollment math

Feasibility is where timelines live or die.

And most teams still do feasibility using the wrong inputs.

Recruitment delays are blamed for trial delays in about 80% of studies, and in many datasets ~80% of trials fail to hit targeted enrollment within the planned timeframe (Gitnux).

Even worse, ~48% of trial sites under-enroll, and ~85% of trials fail to retain enough participants without extensions (Gitnux).

This usually looks like sites quoting prevalence instead of validating eligible charts, ignoring competing trials at the same institution, underestimating visit burden, and having no backup plan if the first few sites miss targets. The root causes are leadership pressure to commit to aggressive timelines, feasibility calls conducted only with investigators (not coordinators), and teams avoiding hard questions to preserve momentum.

In real feasibility calls, sites often promise optimistic numbers without pushback. Once live, coordinators struggle, referrals drop, screening logs weaken, and dashboards stay “green” because averages hide site-level failure. By the time reality sets in, months are lost and the default rescue is “add more sites.”

The impact includes under-enrollment, extensions, higher cost per patient, lower team morale, and selection bias as sites push borderline patients.

Prevention: Require chart-based validation of inclusion/exclusion criteria, explicit documentation of competing trials, coordinator-led scoring of visit burden (time, staffing, workflow, patient travel), and a backup recruitment plan built before first patient in.

Early warning signals during planning include how few sites actually validated criteria against charts, how often core assumptions change week to week, and vague or inconsistent answers about where patients will realistically come from.
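To make the enrollment math concrete, here is a minimal sketch of a site-level projection. It assumes a simple funnel (chart-validated eligible patients, then consent, then screening) and that sites behave independently. The `SiteFeasibility` fields, the site names, and every number are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass, replace
import math


@dataclass
class SiteFeasibility:
    name: str
    eligible_per_month: float  # chart-validated eligible patients per month (assumed input)
    consent_rate: float        # share of eligible patients expected to consent (0-1)
    screen_pass_rate: float    # share of consented patients who pass screening (0-1)


def monthly_enrollment(site: SiteFeasibility) -> float:
    """Expected enrolled patients per month for one site."""
    return site.eligible_per_month * site.consent_rate * site.screen_pass_rate


def months_to_target(sites: list[SiteFeasibility], target: int) -> float:
    """Months needed to reach the target if every site performs as assumed."""
    rate = sum(monthly_enrollment(s) for s in sites)
    return math.inf if rate <= 0 else target / rate


# Hypothetical inputs for illustration only.
sites = [
    SiteFeasibility("Site A", eligible_per_month=10, consent_rate=0.5, screen_pass_rate=0.8),
    SiteFeasibility("Site B", eligible_per_month=5, consent_rate=0.4, screen_pass_rate=0.5),
]

# Stress case: what if consent rates turn out to be half of what sites promised?
stressed = [replace(s, consent_rate=s.consent_rate / 2) for s in sites]

print("Base case months:", months_to_target(sites, target=60))
print("Stressed months:", months_to_target(stressed, target=60))
```

With these made-up inputs the base case works out to about 12 months and the stressed case to about 24. A timeline that only holds in the base case is a best-case plan, which is exactly the gap a backup recruitment plan is meant to cover.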

Mistake 3: Ignoring the biggest barrier - Site capacity and friction

The biggest barrier to clinical trials is often not patients but site capacity: contracting speed, staffing turnover, clinic workflow, and competing priorities.

This mistake appears when PI enthusiasm is treated as readiness, activation plans assume hospital legal and finance teams move quickly, and monitoring or data cleaning resources are under-budgeted. The result is start-up stalls, missed enrollment windows, slow query turnaround, declining retention due to poor patient experience, and growing risk at database lock.

Prevention: Build a most-likely plan rather than a best-case scenario, budget realistically for monitoring and proactive data cleaning, and add contingency sites early instead of waiting for panic.

Common mistakes in Protocol Design

Mistake 1: Vague primary or secondary endpoints

One common mistake is having vague primary or secondary endpoints and unclear estimands. Endpoints need to be measurable, consistent, and ready to support decision-making. When they are poorly defined, different sites end up interpreting them in their own way, which fragments the data and weakens the study.

This usually shows up as endpoints that sound clinically meaningful but lack clear operational rules, multiple methods being used to measure the same outcome across sites, and missing data that was never anticipated, often because patients skip the most burdensome or complex assessments.

These issues tend to arise because teams optimize protocols for publication language rather than operational clarity. Estimands and statistical assumptions are often not discussed early enough, and site coordinators or data managers (the people who understand what is actually feasible) are not consulted during protocol development.

The impact can be significant. Sponsors may need to issue protocol amendments just to clarify how measurements should be taken. Missing data increases, conclusions become weaker, and regulators may push back with fundamental questions such as, “What exactly does this estimate represent?”

Prevention: Fully operationalize each endpoint by specifying who measures it, when it is measured, which instrument is used, and what qualifies as a valid assessment. Endpoints should align with both clinical meaning and standard-of-care workflows. Confirm feasibility with coordinators and CRAs before the protocol is finalized, and keep the overall design aligned with ICH E9 principles, particularly the E9(R1) addendum on estimands and the handling of intercurrent events.

Mistake 2: Overcomplicated protocol

Complex protocols often appear thorough on paper, but they frequently break down during execution. Excessive burden on sites and participants leads directly to protocol deviations, higher dropout rates, and slower enrollment.

This typically shows up as long visit schedules filled with numerous “optional” procedures or exception cases, case report forms that are overly complex and poorly aligned with actual clinic workflows, and a pattern of many small deviations appearing early in the enrollment phase.

These problems usually stem from scope creep driven by too many stakeholders, a mindset of “let’s collect it in case we need it,” and the absence of a pilot workflow review with site coordinators before finalizing the protocol.

The impact is tangible and costly. Enrollment slows, participant retention drops, and data quality becomes inconsistent across sites. At the same time, monitoring efforts and data cleaning workloads increase sharply.

Prevention: Remove low-value data fields and procedures, simplify visit schedules and window logic, and run a structured workflow review with site coordinators and CRAs before the protocol is finalized.

Use eCRF best practices and cross-functional review, as described in eCRF Design: Best Practices.

Mistake 3: Unrealistic inclusion/exclusion criteria

Eligibility criteria ultimately define the reality of your recruitment. When those criteria are too narrow or unclear, screen failure rates rise quickly and recruitment becomes far more difficult than anticipated.

This often becomes evident within the first few weeks, with high screen failure rates, frequent site-by-site debates over whether borderline patients qualify, and eligibility requirements that depend on data not routinely collected during standard clinical care.

These issues usually arise because eligibility criteria are written without meaningful input from study coordinators, because the pursuit of a “clean” dataset takes priority over real-world feasibility, and because teams assume physicians will refer patients in a perfectly aligned and consistent way.

The consequences are serious. Timelines are extended, expensive rescue strategies become necessary, selection bias can emerge as sites begin cherry-picking easier-to-qualify patients, and additional protocol amendments and retraining efforts are required.

Tufts research noted that modifying eligibility criteria due to design changes or recruitment difficulty is a common driver of amendments, and nearly one-third to nearly half of amendments could have been avoided with better upfront planning (Springer / Tufts).


Common mistakes in Site Start-up

Mistake: Underestimating IRB/ethics review complexity

A common site start-up mistake is underestimating the time and complexity of Institutional Review Board (IRB) or ethics committee review. IRB delays are structural rather than exceptional, often arising because protocols are written for scientific audiences rather than for ethics review, which prioritizes participant protection and operational clarity.

This typically results in repeated IRB queries, post-approval amendments that reset review timelines, and consent forms that are overly technical or unclear. Missing operational details (such as recruitment processes, data handling, and safety follow-up) further compound delays. In practice, these issues can add approximately two to four months to study timelines, with sponsor costs continuing to accrue during this period.

Delays can be reduced by using an IRB-ready submission checklist, simplifying consent language, and including a concise operational detail package covering recruitment, data flow, and safety reporting. Pre-review by experienced regulatory staff and alignment with established guidance, such as the MRCT Center guidance on IRB/EC considerations for DCT review, also help.

Mistake: Starting contracts and budgets too late

Starting contract and budget negotiations too late is another critical error in site start-up. Contracting is often treated as an administrative step rather than a critical-path activity, resulting in situations where IRB approval is achieved but site activation remains blocked.

Common signs include prolonged budget negotiations, line-by-line contract disputes, and clinical trial agreements stalled in legal review queues. Based on field experience, these delays can extend site activation by two to five months per site.

To mitigate this risk, contract and budget development should begin early and proceed in parallel with protocol finalization. Standardized templates, predefined fallback language, and active tracking of stalled items (“time untouched”) help maintain momentum and surface issues early.

Mistake: Incomplete IRB submissions and missing operational details

Incomplete IRB submissions frequently lead to rework and loss of start-up momentum. These gaps increase the likelihood of delayed first patient in (FPI) and raise the risk of protocol changes requiring re-consent after study initiation.

Typical deficiencies include misaligned recruitment materials and consent forms, unclear data privacy language, undocumented safety reporting workflows, and undefined training plans. These inconsistencies often result in uneven implementation across sites and additional IRB queries.

Preventive measures include using a comprehensive submission package checklist and ensuring alignment across recruitment, data handling, safety reporting, and training plans prior to submission. Referencing established frameworks, such as the World Health Organization’s clinical trial best practice guidance, supports completeness and consistency (WHO Guidance for best practices for clinical trials).

Mistake: Documentation chaos

Documentation issues are often underestimated until audits or inspections expose their impact. Poor version control and fragmented communication systems make it difficult to identify authoritative documents or track decision history.

This commonly appears as multiple versions of protocols and manuals, undocumented changes, and documents that are not maintained after initial creation. The consequences include protocol deviations, inconsistent data capture, audit findings, and retraining requirements.

These risks can be reduced by establishing a single source of truth for essential documents, assigning clear ownership and versioning rules, and maintaining a decision log that records key changes and their rationale. While errors in clinical research are inevitable, robust documentation systems determine whether they are identified early or escalate into compliance issues. A practical resource for the human side of these mistakes is the discussion “Are mistakes inevitable in clinical research, especially with data entry?”

Common mistakes during Trial Conduct

Mistake: No real recruitment workflow

Recruitment failures are often workflow failures rather than motivation problems. When recruitment processes are poorly designed, reminders and pressure do little to improve outcomes. A recurring real-world pattern is seen in multicenter Phase II cardiology trials requiring enrollment shortly after hospital discharge. Although feasibility may appear strong on paper, protocols are sometimes finalized before coordinators and discharge planners are involved. As a result, patients are discharged within narrow time windows, and research teams are notified too late to screen and consent, leading to stalled enrollment.

In practice, correcting the workflow, rather than increasing pressure, drives improvement. Effective fixes have included allowing pre-consent during inpatient stays, embedding screening steps into discharge workflows, granting coordinators standing permission to pre-screen electronic health records, and retraining sites using clear, step-by-step enrollment playbooks. When paired with expedited IRB review for operational amendments, these changes have led to rapid enrollment recovery, often tripling enrollment within weeks and avoiding the need to add sites or extend timelines.

Mistake: Enrollment pushed over enrollment quality

Another common mistake is prioritizing enrollment speed at the expense of eligibility quality. Rapid enrollment that compromises inclusion criteria does not represent success; it introduces hidden bias and downstream rework. This issue typically appears as rising screen failure rates, inconsistent eligibility decisions across sites, and overly flexible interpretations of criteria.

These problems often stem from pressure to meet enrollment targets, unclear eligibility definitions, and insufficient coordinator training. The consequences include wasted screening costs, increased safety risk, selection bias, and weaker endpoint credibility. Preventive measures include weekly reviews of screen failures with documented root causes, the use of eligibility decision trees for edge cases, and early retraining before inconsistent practices become embedded.
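A weekly screen failure review can start from something as small as the sketch below. It assumes a screening log exported as a list of records with hypothetical `site`, `passed`, and `reason` fields; a real log would come from the study's own screening system and use the protocol's own failure categories.

```python
from collections import Counter


def screen_failure_review(log):
    """Return (failure rate per site, failure reasons ranked by frequency).

    `log` is an iterable of dicts with hypothetical keys:
    'site' (str), 'passed' (bool), 'reason' (str, or None when passed).
    """
    screened = Counter()
    failed = Counter()
    reasons = Counter()
    for row in log:
        screened[row["site"]] += 1
        if not row["passed"]:
            failed[row["site"]] += 1
            reasons[row["reason"]] += 1
    rates = {site: failed[site] / screened[site] for site in screened}
    return rates, reasons.most_common()


# Made-up log entries for illustration only.
example_log = [
    {"site": "A", "passed": True, "reason": None},
    {"site": "A", "passed": True, "reason": None},
    {"site": "A", "passed": True, "reason": None},
    {"site": "A", "passed": False, "reason": "lab value out of range"},
    {"site": "B", "passed": False, "reason": "lab value out of range"},
    {"site": "B", "passed": False, "reason": "prohibited medication"},
]

rates, ranked_reasons = screen_failure_review(example_log)
print(rates)
print(ranked_reasons)
```

Looking at rates per site, not only the study-wide average, is the point: one site failing every screen can hide behind several healthy ones.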

Errors in clinical reasoning (at sites and sponsor level)

Errors in clinical reasoning can distort both eligibility assessment and safety reporting, leading to inconsistent study populations across sites. Common cognitive pitfalls include anchoring on an initial diagnosis, confirmation bias during eligibility assessment, failure to consider competing causes of symptoms, and over-reliance on clinician referrals.

Reducing these errors requires structured screening checklists linked directly to protocol criteria, standardized definitions in manuals and training, and secondary review for borderline cases involving the principal investigator, coordinator, or medical monitor. These safeguards help ensure consistency and protect study integrity.

What can go wrong in Clinical Trials: Deviations, Retention drops, Safety reporting delays

Many clinical trial failures present early warning signs if monitored consistently. Deviations, retention issues, and safety reporting delays rarely emerge suddenly; they typically develop gradually and can be detected through routine tracking. Common issues include missed or out-of-window visits, dosing errors, delayed or incomplete adverse event reporting, and declining retention after burdensome study visits.

Effective oversight includes weekly review of deviation trends by site and category, monitoring delays between visits and data entry, tracking site responsiveness, and analyzing retention by visit complexity. For additional lived experience from CRAs, the thread on “What’s the worst mistake you’ve made as a CRA?” highlights a consistent theme: small misses become big when documentation and escalation are weak.

Common mistakes in Data, Monitoring, & Quality

Data quality does not start at database lock. It starts when a coordinator reads the CRF and tries to map it to real care.

Mistake: Inconsistent Data Capture and CRFs Misaligned With Site Reality

Inconsistent data capture often arises when case report forms (CRFs) do not reflect how work is actually performed at study sites. When CRFs fail to align with site workflows, coordinators are forced to improvise, leading to variability in how fields are interpreted and completed across sites. This commonly appears as repeated queries on seemingly straightforward data points and source data that does not map cleanly to the electronic data capture (EDC) system.

These issues typically occur when CRFs are designed without sufficient coordinator input, data definitions are unclear or incomplete, and training emphasizes theoretical understanding rather than practical examples. The resulting impact includes longer data cleaning cycles, increased missing or inconsistent data, delayed database lock, and reduced interpretability of study endpoints. Preventive measures include structured CRF review with site coordinators before finalization, clear data definitions with completion examples in study manuals, and interdepartmental review and quality checks in line with established eCRF design best practices (eCRF Design: Best Practices).

Mistake: Delayed Data Cleaning and Lack of Real-Time Monitoring

Delaying data cleaning until late in the study increases cost and complexity, as sites are often required to reconstruct clinical context months after visits occur. This pattern is frequently characterized by growing query backlogs, reliance on an end-of-study “cleaning sprint,” and the late discovery of missing or unusable data.

The consequences include delayed database lock, extended trial operations, increased cost, and a higher risk that endpoints become difficult or impossible to interpret. Early warning signals include rising weekly query volumes, repeated query types, emerging patterns of missing data across sites, and increasing time from visit completion to data entry. Effective prevention requires establishing a regular data review rhythm with clear ownership, implementing risk-based monitoring with centralized dashboards, and using trigger-based escalation when predefined thresholds are crossed.
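Trigger-based escalation can be as simple as comparing each site's weekly metrics against predefined limits. The sketch below is one hedged way to express that; the metric names and threshold values are hypothetical, and real limits belong in the study's monitoring plan.

```python
# Hypothetical thresholds for illustration; not recommended values.
THRESHOLDS = {
    "open_queries": 50,          # count of unresolved queries
    "data_entry_lag_days": 7,    # median days from visit to data entry
    "missing_data_pct": 5.0,     # percent of expected fields missing
}


def escalation_triggers(site_metrics, thresholds=THRESHOLDS):
    """Return the names of metrics that exceed their threshold for one site.

    Metrics absent from `site_metrics` are treated as 0 (an assumption of
    this sketch; a real system may prefer to flag missing metrics instead).
    """
    return [
        name
        for name, limit in thresholds.items()
        if site_metrics.get(name, 0) > limit
    ]


# Made-up weekly snapshot for one site.
site_101 = {"open_queries": 80, "data_entry_lag_days": 3, "missing_data_pct": 6.5}
print(escalation_triggers(site_101))
```

Keeping the thresholds in one place makes them easy to review and change as a team, which matters more than the exact numbers chosen at the start.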

Mistake: Misalignment Between Statistical Analysis Plans and Protocol Reality

Misalignment between the statistical analysis plan (SAP) and the protocol introduces both regulatory risk and internal friction, particularly when timelines are compressed. This issue often becomes visible when analysis assumptions are undermined by missing data, protocol deviations, or visit window variability, leading to unplanned post hoc sensitivity analyses and uncertainty about whether the study can answer its original research question.

The impact includes reduced confidence in results, regulatory scrutiny, additional analysis requests, and delays in reporting and submission readiness. These risks can be mitigated by maintaining close alignment between protocol design and the SAP, planning for missing data scenarios early, and defining appropriate sensitivity analyses in advance. Analysis strategies should remain consistent with Good Clinical Practice and ICH principles without unnecessary complexity.

Common Ethical and Compliance Mistakes

Ethics and compliance are not “paperwork.” They are how you protect participants and make results credible.

Ethics issues cause delays because IRBs ask the right questions. If you do not answer them upfront, you will answer them later under time pressure.

Core issues to address clearly:

- Informed consent clarity and readability

- Minimizing burden and making procedures reasonable

- Fair participant selection and inclusion rationale

- Privacy and data handling, especially with remote tools

- Safety monitoring and who pays for injury or extra care (as applicable)

Missing detail here often triggers IRB queries and can force re-consent later.

If your study includes decentralized elements, use the MRCT Center DCT IRB/EC considerations to pre-empt predictable concerns: people, data integrity/security, and oversight.

Are pregnant women usually included in clinical trials for new drugs?

Often, pregnant women are excluded from early drug trials. The main reason is fetal risk and unknown safety, especially when early human safety data is limited.

Later in development, inclusion may happen with strict safeguards. This can include enhanced monitoring, specialized consent language, and pregnancy exposure registries. The key is to plan the ethics and safety monitoring approach early, because it influences eligibility, consent, and AE reporting.

Mistake: Late Compliance Checks

Reactive compliance creates avoidable findings. If you only fix issues after audits or monitoring visits, you are already behind. This often shows up as workarounds becoming “normal,” essential documents missing or expired in the eTMF, training drifting over time (especially after staff turnover), and CAPAs being opened but not properly closed.

As an experienced research professional noted, clinical trials depend on accurate, complete, and compliant documentation, yet even small errors can cause delays, compliance problems, or even study failure. Common pitfalls include incomplete or inconsistent source documentation, poor management of essential documents in the eTMF, and incorrect or missing delegation of authority (DOA) logs. The solution is proactive systems such as checklists, ongoing training, automated reminders, regular QC audits, and real-time document updates.

Prevention requires routine QC and TMF completeness checks, weekly CAPA aging reviews with clear escalation rules, training refreshers linked to deviation and query trends, and strong version control with enforced document ownership.

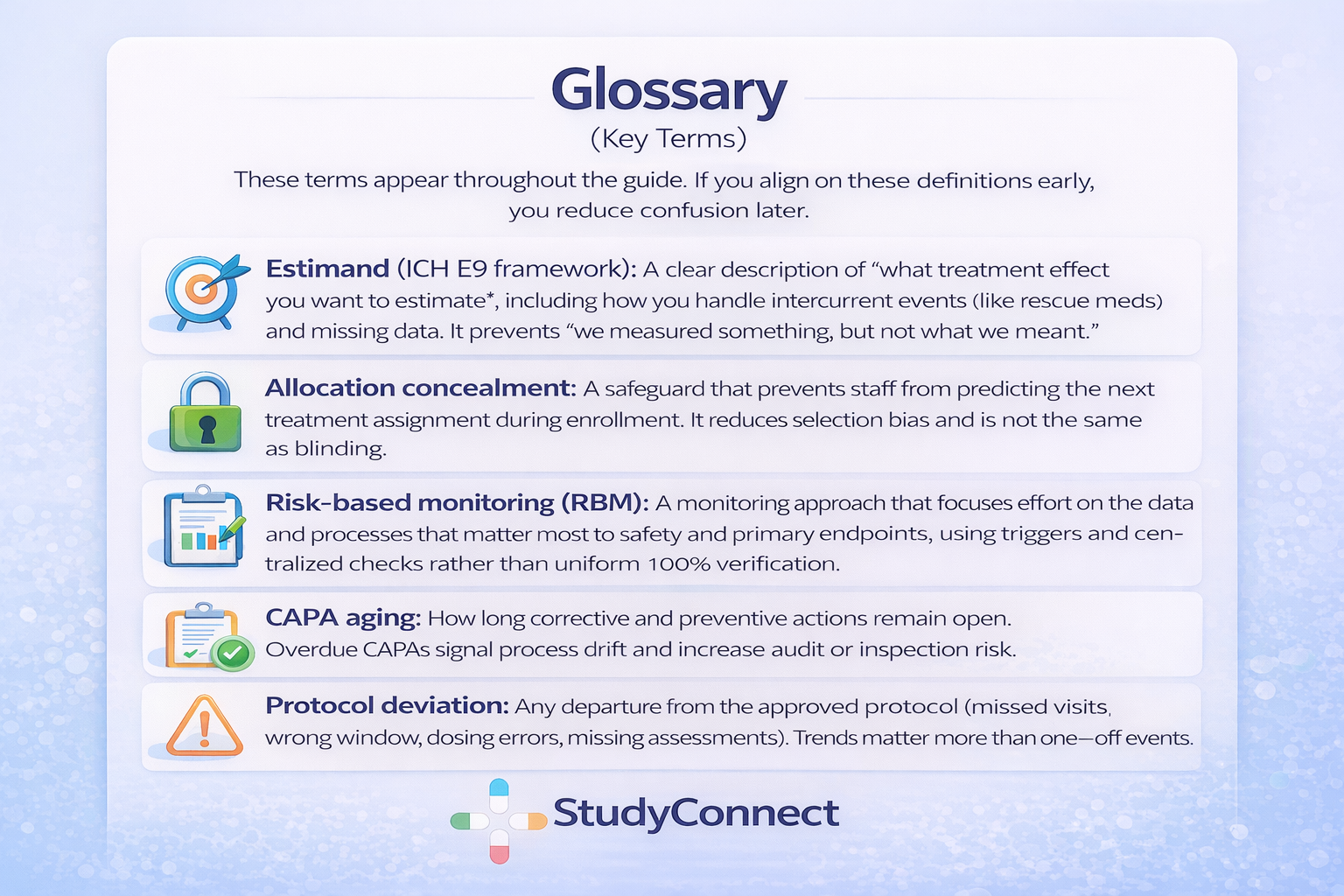

What is CAPA aging?

CAPA aging is the time a corrective or preventive action stays open. It is a simple metric, but auditors care because it reflects whether you truly control your process.

Overdue CAPAs usually signal one of three issues:

- The root cause was not understood, so the fix is unclear

- Ownership is weak, so actions do not get completed

- The organization tolerates drift and relies on heroics

A weekly CAPA aging review is one of the fastest ways to stay inspection-ready. It turns compliance from a reaction into a system.
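For a weekly review, CAPA aging reduces to two numbers: how long open items have been open, and how many are past due. The sketch below assumes each open CAPA is a record with hypothetical `id`, `opened`, and `due` fields; the dates are invented for illustration.

```python
from datetime import date
from statistics import median


def capa_aging(open_capas, today):
    """Summarize open CAPAs: median days open and count past their due date.

    `open_capas` is a list of dicts with hypothetical keys
    'id' (str), 'opened' (date), and 'due' (date).
    """
    ages = [(today - capa["opened"]).days for capa in open_capas]
    overdue = [capa["id"] for capa in open_capas if capa["due"] < today]
    return {
        "median_days_open": median(ages) if ages else 0,
        "overdue_count": len(overdue),
        "overdue_ids": overdue,
    }


# Made-up records for illustration only.
review_date = date(2024, 3, 31)
open_items = [
    {"id": "CAPA-1", "opened": date(2024, 3, 1), "due": date(2024, 3, 15)},
    {"id": "CAPA-2", "opened": date(2024, 3, 21), "due": date(2024, 4, 20)},
    {"id": "CAPA-3", "opened": date(2024, 1, 31), "due": date(2024, 3, 1)},
]

summary = capa_aging(open_items, review_date)
print(summary)
```

Listing the overdue IDs alongside the count keeps the review actionable: each overdue item should leave the meeting with a named owner and a next step.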

Device Trials Callout: What Changes Under ISO 14155 and EU MDR

Device trials come with additional regulatory expectations that drug-focused teams often underestimate. If you are running a device study or a drug–device combination trial, these requirements need to be planned from the outset, not added later.

In practice, this means a stronger and more explicit linkage between the clinical investigation and the device risk management file, including clear benefit–risk justification. Device accountability and traceability become critical, along with documented training on correct device use. Usability and human factors considerations must be integrated, especially where they directly affect safety or clinical outcomes.

Device trials also carry distinct vigilance reporting expectations and timelines, as well as alignment with the EU MDR clinical evaluation framework and evidence planning requirements. All of this must be consistent with ISO 14155 principles for clinical investigation of medical devices.

This is not about increasing paperwork. It is about anticipating regulatory expectations early and avoiding predictable friction during reviews, audits, and inspections later on.

How to improve clinical trials: a stage-by-stage prevention system

A prevention system beats a rescue plan. The easiest time to save 3–6 months is before the first patient is enrolled.

Weekly early warning dashboard (signals by stage)

Most teams track milestones. Few track friction.

A weekly dashboard should show latency, churn, and quality signals.

Copy-ready template (simple version):

Planning

- Sites validated I/E against charts: # validated / # targeted

- Competing trials documented: yes/no by site

- Assumption changes this week: count + description

Protocol

- Revision churn: # major changes this week

- Ops feedback share: % of comments from coordinators/CRAs/data

- “TBD” items open: count

Start-up

- Contract “time untouched” (median days)

- Budget “time untouched” (median days)

- IRB query cycles per site (count)

- Speed variance: fastest vs slowest site (days)

Conduct

- Screen failure rate trend (weekly)

- Enrollment vs plan (by site)

- Deviations per subject and per site

- Data entry lag (days from visit to entry)

Data

- Query volume trend (weekly)

- Repetitive queries (top 5 fields)

- Missing data patterns (top variables)

Compliance

- CAPA aging (median days open; overdue count)

- TMF completeness % (critical documents)

- Training completion and refresh status

This comes straight from a practical idea: track “latency” everywhere. When response times stretch week over week, delays are already forming.
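The latency idea can be sketched in a few lines. The example below computes a median “time untouched” for a set of open items and flags a metric that has risen several weeks in a row. The three-week window and all values are assumptions for illustration, not a standard.

```python
from datetime import date
from statistics import median


def median_days_untouched(last_touched_dates, today):
    """Median number of days since each open item was last acted on."""
    ages = [(today - touched).days for touched in last_touched_dates]
    return median(ages) if ages else 0


def is_stretching(weekly_values, weeks=3):
    """True if the metric rose in each of the last `weeks` week-over-week steps."""
    if len(weekly_values) < weeks + 1:
        return False  # not enough history to judge a trend
    recent = weekly_values[-(weeks + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


# Made-up data: last activity dates for three open contracts.
contracts = [date(2024, 5, 1), date(2024, 5, 10), date(2024, 5, 13)]
print(median_days_untouched(contracts, today=date(2024, 5, 15)))

# Made-up weekly medians of contract "time untouched", oldest first.
history = [4, 5, 7, 9]
print(is_stretching(history))
```

A flag like this is deliberately crude. Its job is to prompt a conversation with the site or legal team while the delay is still small, not to replace judgment.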

Why do 90% of clinical trials fail? The practical view

The “90% fail” statistic is real in the drug development funnel.

Industry-wide, roughly 90% of drug candidates entering clinical testing never reach approval, with phase-specific failure rates often cited as ~30–40% in Phase I, ~70–80% in Phase II, and ~40–50% in Phase III before regulatory review (MedX DRG).

But the practical reasons are often preventable:

- Recruitment shortfalls and retention problems (common and persistent)

- Operational burden and workflow mismatch

- Endpoints that are not measurable consistently

- Data quality issues and late cleaning

- Funding/time overruns

- Ethics and regulatory friction due to missing details

- Strategic/business reprioritization (often tied to weak execution signals)

Remember the termination data: enrollment issues were cited in ~18% of Phase II/III terminations with reasons provided, and strategic/business decisions in ~36% (Nature Reviews Drug Discovery). Process and planning matter because they shape those decisions.
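As a quick arithmetic check, the phase-specific failure rates quoted in this section compound to roughly the headline figure. The sketch below multiplies the per-phase success rates, assuming the phases are independent and ignoring the regulatory review step, which is a simplification.

```python
def overall_success(phase_failure_rates):
    """Probability of passing every phase, given each phase's failure rate."""
    probability = 1.0
    for failure_rate in phase_failure_rates:
        probability *= 1.0 - failure_rate
    return probability


# Failure rates taken from the ranges quoted in this section (Phase I, II, III).
pessimistic = overall_success([0.40, 0.80, 0.50])
optimistic = overall_success([0.30, 0.70, 0.40])

print(f"Overall success range: {pessimistic:.1%} to {optimistic:.1%}")
```

That gives about 6% at the pessimistic end and about 12.6% at the optimistic end, which sits inside the 5–14% approval range cited earlier and is consistent with “around 90% fail.”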

What are the steps in conducting clinical trials for new drugs?

Clinical trials follow a familiar path. The same mistakes show up at predictable points.

- Planning / feasibility - Mistakes: optimistic enrollment math, no backup plan, unclear objectives

- Protocol + SAP design - Mistakes: vague endpoints, unclear estimands, overly complex schedules, narrow I/E

- Site selection + IRB/ethics + contracts - Mistakes: incomplete submissions, late contracting, IRB cycles not planned

- Site activation (training, EDC access, materials) - Mistakes: IRB approved but not activation-ready, training drift begins

- Enrollment + conduct - Mistakes: no recruitment workflow, screen failures rising, protocol drift

- Monitoring + quality management - Mistakes: slow issue escalation, weak RBM triggers, repeated deviations

- Data cleaning + database lock - Mistakes: delayed cleaning, query backlogs, systemic missingness

- Analysis + reporting - Mistakes: SAP misaligned to protocol reality, weak sensitivity planning

If you want a deeper list of design pitfalls, the article on Top Five Mistakes in Clinical Protocol Design covers common failure patterns like too many objectives and missing interdisciplinary input. Use it as a cross-check, not as a template.

How StudyConnect can reduce preventable mistakes

Many avoidable trial mistakes come from building in a silo. Teams make assumptions about clinics, coordinators, endpoints, and recruitment flow without fast access to the right people.

StudyConnect helps teams talk to verified clinicians, researchers, and trial-experienced experts early, so feasibility and workflow are tested before they become protocol text.

The practical value is reducing guesswork in:

- Feasibility assumptions (chart reality, patient flow, competing trials)

- Endpoint measurability (what can be captured consistently)

- Real workflow design (screening, consent, discharge timing, retention)

- Early compliance readiness (documentation expectations and common gaps)

This is not about replacing CROs or IRBs. It is about reducing preventable surprises before they cost months.

Get in touch with the team at StudyConnect at matthew@studyconnect.world to help you connect with the right medical advisor.


What can go wrong in clinical trials and how can clinical trials be improved?

Most problems are predictable: recruitment shortfalls, IRB cycles, contract stalls, deviations, missing data, and late compliance fixes.

Improvement levers are also predictable: realistic feasibility, IRB-ready protocols, early contracting, workflow-first recruitment, real-time data oversight, and proactive compliance.

If you want a broad research-oriented list of common research mistakes, see Fifteen common mistakes encountered in clinical research.

For a compliance-oriented perspective, the video 5 Clinical Trial Mistakes That Can Ruin Your Reputation is a useful reminder that noncompliance findings are not rare, and reputational damage is real.